[…] ** You should always consider ROW data_compression (https://logicalread.com/sql-server-2008-table-compression-mb01/#.WVGY7bpFyqk) […]

The best reason(s) for not trying index compression in SQL Server® may be because you are using Standard Edition (or less) and/or your version is prior to SQL Server 2008—at least, that is my current thinking. For a properly sized and tuned server, there is almost no downside. But, let’s look further into how I arrived at that conclusion.

Object compression was a new feature for SQL Server 2008 Enterprise Edition and higher, and is now available for SQL Server 2016 SP1 Standard Edition. This allows you to have row or page compression for clustered and non-clustered indexes.

Table Compression Details:

Row compression essentially looks at each fixed width column to see if data within can be compressed; it does cost a few extra bits. Page compression is the same as row compression, but also looks for data commonality to alias much like the common file compression algorithms only at the page level. Both come at cost of increased CPU “usage.” Data is compressed/uncompressed in the processor L2 Cache, not data buffers (RAM).

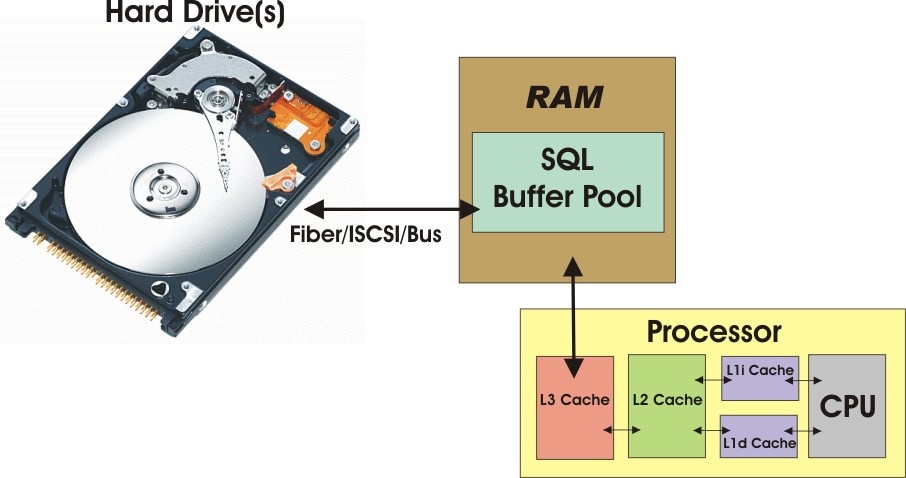

Figure 1: Data Flow From Hard Drive to Processor (© 2017 | ByrdNest Consulting)

With index compression, compressed data resides on the hard drive(s), SQL Buffer Pool, and the Processor’s L3 and L2 cache. Depending on connectivity, data is brought into RAM and stored in the SQL Buffer Pool as needed. In the L2 Cache, data is decompressed/compressed as needed. With row compression, data is decompressed/compressed on a row basis. With page compression (if that page has page compression), the entire page is decompressed/compressed in L2 Cache. In page compression, if a page is not “full,” it will most likely just have row compression. This can happen as data is added, deleted, or when there are page splits. In any case, when data is compressed, you can have more of it in the SQL Buffer Pool and subsequently more in L3 Cache and L2 Cache. Latency is worse between the hard drive and RAM (physical reads), but also needs to be considered between the SQL Buffer Pool and L3 Cache and L2 Cache. Anything that lets you “cram” more data into each of these “containers” may improve performance.

So, what is the cost of page or row compression? Obviously there is a CPU cost (more cycles). Turns out that for years, there have been some seldom-used instructions in the chipset. These instructions process multiple streams of data at one time in one instruction cycle. An analogy might be changing all four tires (NASCAR® country talking) simultaneously. The official name of this group of instructions is SIMD (Single Instruction, Multiple Datastreams) and they operate at register speeds (even faster than main memory). More details can be found at http://researcher.watson.ibm.com/researcher/files/us-lefurgy/micro30.net.compress.pdf. The performance effect is discussed below.

Table Compression Size Estimation:

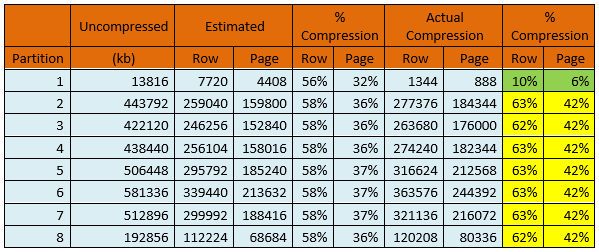

To Microsoft’s credit, they did give a tool to evaluate the potential for compression savings on either of these two objects. sp_estimate_table_compression_savings is a stored procedure that returns current size and estimated compression for a specified table (or index) and a specified compression type (row or page). Applying this sproc to a partitioned table and then comparing to actual compression sizes is shown in the table below:

Table 1: Estimated Data Compression (© 2017 | ByrdNest Consulting)

Although Microsoft® doesn’t explain it well, the procedure samples a portion of the table (and partitions) much like index statistics to arrive at an estimate of how well the compression does. Except for partition 1, the actual compression was not as good as the estimated compression for this case. This seems typical for the several cases I tested. I’m not sure why, but the estimated size results just seemed optimistic.

Table Compression Performance:

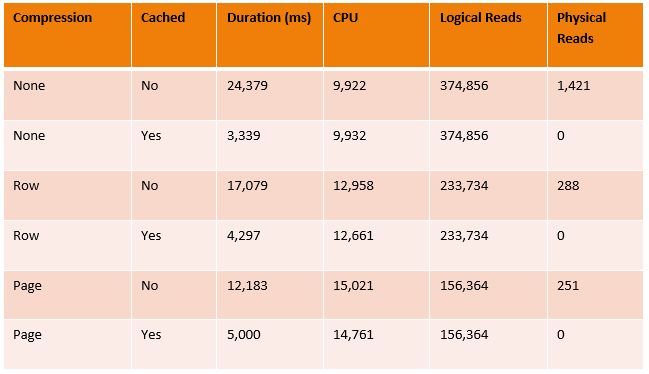

Below is a chart with statistics gathered from a typical SELECT query using both SET STATISTICS ON and Profiler:

Table 2: Comparison of Performance Data for No, Row, Page Compression (© 2017 | ByrdNest Consulting)

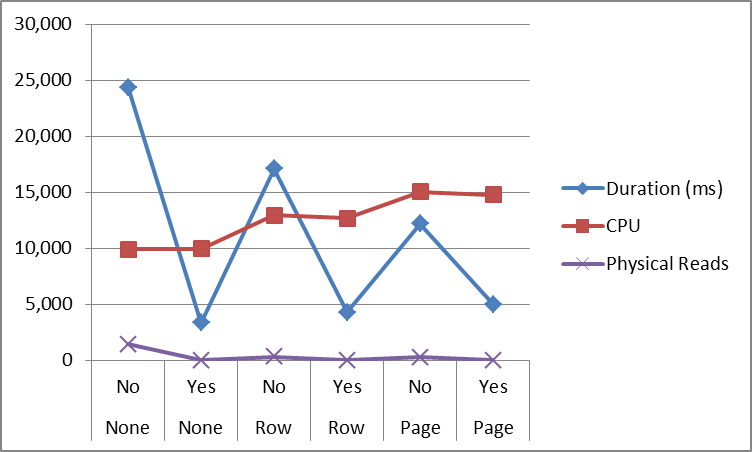

Note that for data not cached, physical reads decreased as compression increased. Correspondingly, durations decreased for data not cached (as expected), but slightly increased (as did CPU times) for data being cached. Logical reads were the same whether data was cached or not but decreased with compression. Here are the same results in graphical form:

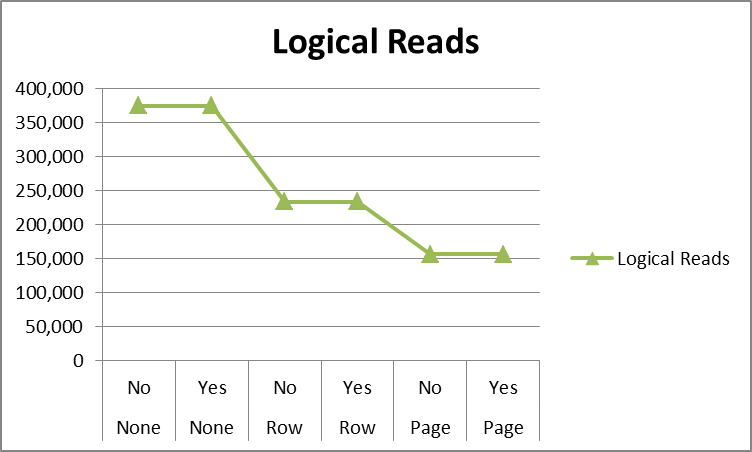

Figure 2: Typical Logical Reads with/without compression (© 2017 | ByrdNest Consulting)

Where the No/Yes in the horizontal axis refers to data cached or not cached and the None/Row/Page refers to the type of table compression.

Note that the logical reads decrease as the compression is increased; data cached or not does not matter (as expected).

Figure 3: Performance with/without compression (© 2017 | ByrdNest Consulting)

The horizontal axis is the same as described above.

Note that CPU duration increases (as expected) with compression, and physical reads with compression show a decreasing trend. Duration decreases with data not cached as compression increases, but increases slowly (increased CPU usage) when data is already cached.

The data in these graphs came from a production OLTP business system. The trends shown are very typical for almost any time of TSQL query (create, update, delete, get).

I rarely use page compression and when I do it is for Data Warehouses on partitioned tables for the partitions with “older” data. The overall cost of page compression can be very expensive (CPU cycles) for rapidly changing data and while for reads can be quite beneficial performance-wise it just costs too much for data modification statements.

Conclusion:

Where the results shown above are typical for all the cases I’ve investigated, there may be exceptions and scenarios where performance could be less. But, to date, I have not found these exceptions in the business world and always row compresses all indexes in both OLTP and OLAP databases. But as Microsoft constantly states: Test! Test! And test more! The functionality of keeping the data compressed all the way to the processor L2 Cache is just a great opportunity that you need to consider. Good luck!

Nice article. Thanks for the info!