The ASM instance can now be set up as a local ASM instance on the database server or as an ASM cluster. An ASM instance can manage the following files for all of the instances on each cluster node: control files, data files, tempfiles, spfiles, redo logs, archive logs, RMAN backups, Data Pump dumpsets, and Flashback logs. The database does not need to be set up as a RAC database to use ASM. In fact, ASM can be used for a single-instance database installation.

There are a few alternative ways to set up ASM for the environment, and the next couple of figures will demonstrate the options. Depending on the configuration, ASM can be more fault tolerant, provide a quicker failover, and support various database and application environment configurations. In previous releases, when allocating the disks to ASM, there was still a risk of a non-Oracle process overwriting something on that disk. The current version of ASM rejects non-Oracle I/O and protects the disks from being overwritten.

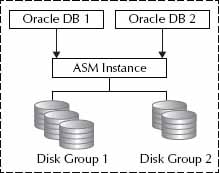

Starting with Figure 1, you’ll see the different options to use ASM. The first option is a database instance with an ASM instance on the same server. One ASM instance can manage the files for more than one database instance on that server. Each database served by that ASM instance has access to all of its disk groups, which are sets of disks that have been allocated to ASM and grouped together.

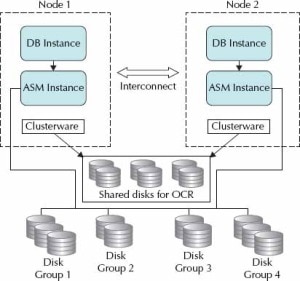

As you can see in Figure 2, the difference with ASM instances in the RAC environment is that they are also available for failover, which means that the RAC components need to be available to manage that failover. An ASM instance is still created on each node, but the instances manage the disk groups and files across all of the nodes. The ASM instances manage the client files and ship the data or client information to the database server.

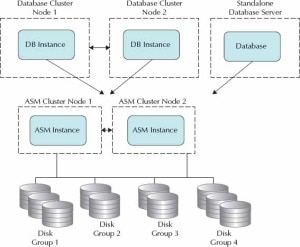

Figure 3 shows the option to have the database server connect directly to the ASM cluster. Here, the ASM instance is no longer on the database server, and access to ASM is available through ASM Listeners and network connections. The ASM Listeners are registered as remote listeners and can load balance across the ASM clusters.

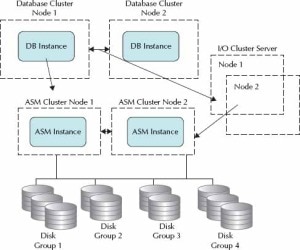

There is another option to have an I/O Server that can deliver the file data and export the disk groups as a service to the database servers. This is an indirect connection from the database server to the ASM cluster through the I/O Server instance, as shown in Figure 4.

All of these architectures provide dynamic performance views to manage and get information about the ASM instance and its clients. For example, V$ASM_CLIENT provides information about the database clients that are using that ASM instance. You can use V$ASM_CLIENT together with the ALTER SYSTEM command to relocate a client to another node gracefully in preparation for maintenance. Also, the ASM instance is still a small database instance because it is mostly composed of memory structures and not physical data. The ASM instance provides the framework for managing disk groups, which contain several physical disks and can come from different disk formats such as a raw disk partition, LUNs (hardware RAID), LVM (redundant functionality), or NFS (can load balance over NFS systems and doesn’t depend on the OS to support NFS). A key advantage of ASM is that it can use raw devices, which bypass any OS buffering.
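As a rough sketch of the relocation step mentioned above, the statements below first list the clients of an ASM instance and then relocate one of them. This assumes a Flex ASM configuration and a session connected to the ASM instance as SYSASM; the client identifier `orcl1:orcl` (instance name, a colon, then database name) is a hypothetical example and should be taken from the query output.

```sql
-- List the database clients currently served by this ASM instance.
SELECT instance_name, db_name, status
  FROM v$asm_client;

-- Relocate one client to another ASM instance before maintenance.
-- 'orcl1:orcl' is a hypothetical instance_name:db_name pair taken
-- from the query above; Oracle chooses the target ASM instance.
ALTER SYSTEM RELOCATE CLIENT 'orcl1:orcl';
```

Running the first query again afterwards on the remaining ASM instances is a simple way to check where the client reconnected.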

The ASM instance can be created during installation of the grid software or by using the Database Configuration Assistant (DBCA). The name of the instance normally starts with +ASM, and Oracle Cluster Synchronization Services (CSS) must be configured. In older releases this service was added with the localconfig script, which also adds the Oracle Cluster Registry (OCR) that is necessary for ASM; with the grid infrastructure installation, it is typically configured for you. Also, there is a SYSASM privilege for logging in as administrator of the ASM instance. The OSASM OS group goes along with this privilege.

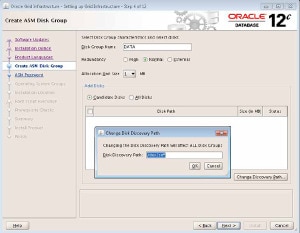

To get started, the grid infrastructure needs to be installed, as shown in Figure 5. If you want to create a database using ASM, the ASM instance needs to be created first. However, you can also migrate an existing database to ASM, in which case the ASM instance is created as part of that migration.

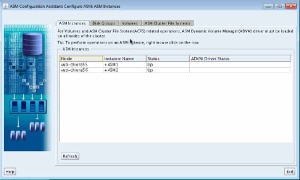

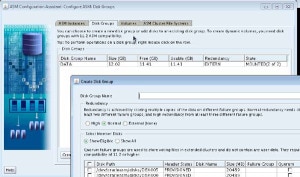

Commands can be used to add disks and create disk groups for the ASM instance. The ASM Configuration Assistant (ASMCA) is also available to add disk groups and volumes for the filesystem. Figure 6 shows the ASMCA, which can stop and start an ASM instance, and Figure 7 shows the creation of disk groups using ASMCA. The result is an easy-to-manage ASM instance and a clustered filesystem.
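For readers who prefer the command route over ASMCA, here is a minimal sketch of creating a disk group and adding a disk with SQL. The disk group name `data`, the disk names, and the device paths are all hypothetical, and the statements assume a session connected to the ASM instance as SYSASM with the disks already visible to ASM.

```sql
-- Check which disks ASM has discovered and whether they are free to use.
SELECT path, header_status
  FROM v$asm_disk;

-- Create a disk group from two candidate disks (hypothetical paths).
-- EXTERNAL REDUNDANCY leaves mirroring to the storage layer.
CREATE DISKGROUP data EXTERNAL REDUNDANCY
  DISK '/devices/diska1' NAME diska1,
       '/devices/diskb1' NAME diskb1;

-- Add a third disk later; ASM rebalances the data across all disks.
ALTER DISKGROUP data
  ADD DISK '/devices/diskc1' NAME diskc1;

-- Confirm the disk group is mounted and see its capacity.
SELECT name, state, type, total_mb, free_mb
  FROM v$asm_diskgroup;
```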

In order for the ASM instance to discover the available disks, an initialization parameter is used: ASM_DISKSTRING. Other parameters include ASM_DISKGROUPS, which lists the disk groups that the ASM instance mounts at startup, and ASM_POWER_LIMIT, which sets the default power (speed) for disk rebalancing operations. INSTANCE_TYPE is set to ASM to indicate that the instance is an ASM instance and not a database.
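As an illustration of these parameters, the following sketch inspects them on a running ASM instance and adjusts two of them. The discovery path and the power value are assumptions chosen for the example, not recommendations, and `SCOPE=BOTH` assumes the instance was started with an spfile.

```sql
-- Show the ASM-related parameters for this instance.
-- On an ASM instance, instance_type is expected to be 'asm'.
SELECT name, value
  FROM v$parameter
 WHERE name IN ('instance_type', 'asm_diskstring',
                'asm_diskgroups', 'asm_power_limit');

-- Tell ASM where to look for disks (hypothetical path pattern).
ALTER SYSTEM SET asm_diskstring = '/dev/oracleasm/disks/*' SCOPE=BOTH;

-- Raise the default rebalance power; higher values rebalance faster
-- at the cost of more I/O.
ALTER SYSTEM SET asm_power_limit = 4 SCOPE=BOTH;
```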

Disk groups can be created during instance creation. After the instance is created, SQL*Plus can be used to start up ASM. SRVCTL is a good way to manage the ASM cluster, and CRSCTL can also assist with status information about the ASM instance.
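A minimal SQL*Plus session for starting ASM might look like the sketch below. It assumes the environment (ORACLE_HOME and ORACLE_SID, for example +ASM) already points at the ASM instance and that the OS user belongs to the OSASM group, so that operating system authentication works.

```sql
-- Connect to the idle ASM instance with the SYSASM privilege.
CONNECT / AS SYSASM

-- Start the ASM instance; disk groups listed in ASM_DISKGROUPS are mounted.
STARTUP

-- Verify that the instance is up and the disk groups are mounted.
SELECT instance_name, status FROM v$instance;
SELECT name, state FROM v$asm_diskgroup;

-- Mount any disk groups that were not mounted automatically.
ALTER DISKGROUP ALL MOUNT;
```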

Friend,

We have a question: is it possible to install ASM on an Oracle Database 12c single-instance server?

Yes, you can use ASM on a Single Instance.

Hi team, thanks for sharing. I have a question here. We both know that with Oracle RAC 12c, the ASM instance does not need to run on the same host as the DB instance. So the DB instance connects to the remote ASM instance via TCP instead of IPC as in previous versions. ASM also has an ASM listener that runs on the same host as the ASM instance. So how does the DB instance know where the ASM instance is running?

You say that “The ASM Listeners are registered as remote listeners and can load balance across the ASM clusters.” I do not understand this statement. Do you mean that we change the remote_listener parameter in the DB instance?