Oracle Real Application Clusters (RAC) provides a database environment that is both highly available and scalable. If a server in the cluster fails, the database remains available through the instances running on the remaining servers, or nodes, in the cluster. Oracle Clusterware simplifies adding a new cluster node. RAC lets applications scale beyond the resources of a single server, so an environment can start with what is currently needed and add servers as necessary. Oracle 9i introduced Real Application Clusters; with each subsequent release, managing and implementing RAC has become more straightforward, with new features improving stability and performance. Oracle 12c brings additional enhancements to the RAC environment, and even more ways to provide application continuity.

In Oracle 11g, Oracle introduced rolling patches for the RAC environment. Previously, it was possible to minimize downtime by failing over to another node for patching, but finishing the patching of all nodes in a cluster still required an outage. Now with Oracle 12c, patches can be applied while the other servers continue working, even on the non-patched version. The patches are applied to the Oracle Grid Infrastructure home and can then be pushed out to the other nodes. Reducing outages, planned or unplanned, is key for companies with 24×7 operations. Oracle Clusterware is the piece that helps in setting up new servers, and it can clone an existing ORACLE_HOME and database instances. It can also convert a single-node Oracle database into a RAC environment with multiple nodes.

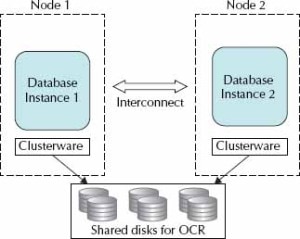

The RAC environment consists of one or more server nodes; of course, a single-server cluster doesn’t provide high availability because there is nowhere to fail over to. The servers, or nodes, are connected through a private network, also referred to as an interconnect. The nodes share the same set of disks, and if one node fails, the other nodes in the cluster take over.
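The failover behavior described above can be illustrated with a toy model. This is only a sketch under simplifying assumptions: the `Cluster` class and its method names are hypothetical and are not part of any Oracle API. It captures just two ideas from the text: the database stays available while at least one node survives, and a single-node cluster has nowhere to fail over to.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Cluster:
    """Hypothetical toy model: each node runs an instance on the same shared disks."""
    nodes: Set[str] = field(default_factory=set)

    def add_node(self, name: str) -> None:
        # Scale out by adding a server to the cluster.
        self.nodes.add(name)

    def fail_node(self, name: str) -> None:
        # A failed node simply leaves the set of surviving nodes.
        self.nodes.discard(name)

    def database_available(self) -> bool:
        # The database is reachable as long as one node still runs an instance.
        return len(self.nodes) > 0

    def has_failover_target(self) -> bool:
        # High availability needs at least one other node to take over.
        return len(self.nodes) > 1
```

With two nodes, failing one leaves the database available but without a failover target, which is the same position as the single-server cluster mentioned above.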

A typical RAC environment has a set of disks shared by all servers, and each server has at least two network ports: one for outside connections and one for the interconnect (the private network between the nodes and the cluster manager). The shared disk cannot be a simple filesystem because it needs to be cluster-aware, which is the reason for Oracle Clusterware. RAC still supports third-party cluster managers, but Oracle Clusterware provides the hooks for the newer features, such as provisioning or deploying new nodes and rolling patches. Oracle Clusterware is also necessary for Automatic Storage Management (ASM), which is discussed later in this chapter.

The shared disk for the clusterware comprises two components: a voting disk, which records node membership, and an Oracle Cluster Registry (OCR), which contains the cluster configuration. The voting disk needs to be shared and can reside on raw devices, Oracle Cluster File System files, ASM, or NTFS partitions. Oracle Clusterware is the key piece that allows all of the servers to operate together.

Without the interconnect, the servers have no way to talk to each other; without the clustered disk, another node has no way to access the same information. Figure 1 shows a basic setup with these key components.
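The key components listed above can be turned into a simple planning check. The following is a minimal sketch, not an Oracle tool: the `Node` type, its fields, and `layout_problems` are hypothetical names introduced for illustration. It verifies only what the text requires: a separate public port and interconnect port on every node, and the same set of shared disks visible to all nodes.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Node:
    """Hypothetical description of one server in a planned cluster."""
    name: str
    public_nic: str                # port for outside connections
    interconnect_nic: str          # port for the private network
    visible_disks: FrozenSet[str]  # shared disks this node can see


def layout_problems(nodes: List[Node]) -> List[str]:
    """Return human-readable problems; an empty list means the layout passes."""
    problems: List[str] = []
    if len(nodes) < 2:
        problems.append("fewer than two nodes: nowhere to fail over to")
    for node in nodes:
        if not node.public_nic or not node.interconnect_nic:
            problems.append(f"{node.name}: needs a public and an interconnect port")
        elif node.public_nic == node.interconnect_nic:
            problems.append(f"{node.name}: public and interconnect share one port")
    if nodes:
        reference = nodes[0]
        for node in nodes[1:]:
            # Every node must see exactly the same shared disks.
            if node.visible_disks != reference.visible_disks:
                problems.append(
                    f"{node.name}: does not see the same disks as {reference.name}"
                )
    return problems
```

An empty result only means the layout matches the description in this section; it says nothing about whether a real installation would succeed.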
