In addition to creating new indexes, you can improve database performance with schema optimizations. These include choosing more specific data types and column types. The benefit is a smaller disk footprint, which produces less disk I/O and allows more index data to fit in available system memory.

Data Types

Several data types can be replaced or modified with little or no impact to an existing schema.

BIGINT vs. INT

When a primary key is defined as a BIGINT AUTO_INCREMENT data type, there is generally no reason this data type is required. An INT UNSIGNED AUTO_INCREMENT data type supports a maximum value of 4,294,967,295 (approximately 4.3 billion). If the table holds more than 4.3 billion rows, other architectural considerations are generally necessary well before this limit is reached.

The impact of modifying a BIGINT data type to an INT data type is a 50 percent reduction in the per-row size of the primary key, from 8 bytes to 4 bytes. The impact is not limited to the primary key; all foreign keys that are defined as BIGINT can now be modified to INT as well. These savings can significantly reduce the space required for indexes in a heavily normalized database. For a more detailed explanation, see http://ronaldbradford.com/blog/bigint-v-int-is-there-a-big-deal-2008-07-18/.

TIP

The change of BIGINT to INT UNSIGNED for an AUTO_INCREMENT column can be one of the best immediate schema improvements for a database server with limited memory and a heavily normalized data model.
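The arithmetic behind this saving can be sketched in a few lines of Python. This is a rough illustration only: the function name and the table dimensions (100 million rows, one primary key, three foreign keys) are hypothetical, and the figure covers raw column data, not index page or record overhead.

```python
# Rough arithmetic only: real index sizes also include page and record overhead.
INT_UNSIGNED_MAX = 2**32 - 1  # largest value an INT UNSIGNED column can hold
BIGINT_BYTES = 8
INT_BYTES = 4


def key_bytes_saved(rows: int, key_columns: int) -> int:
    """Bytes of column data saved when key_columns change from BIGINT to INT."""
    return rows * key_columns * (BIGINT_BYTES - INT_BYTES)


if __name__ == "__main__":
    # Hypothetical table: 100 million rows, 1 primary key + 3 foreign keys.
    saved = key_bytes_saved(rows=100_000_000, key_columns=4)
    print(f"INT UNSIGNED maximum: {INT_UNSIGNED_MAX:,}")
    print(f"Column data saved: {saved / 1024**2:,.0f} MiB")
```

Because each secondary index on these columns stores the key values again, the saving in practice is likely to be larger than the column data alone suggests.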

DATETIME vs. TIMESTAMP

Does the date/time value being recorded fit within an epoch value, that is, the number of seconds since 1/1/1970? If so, a TIMESTAMP column supports all necessary values, covering '1970-01-01 00:00:01' UTC through '2038-01-19 03:14:07' UTC. A DATETIME column supports a much wider range, from the year 1000 through the year 9999. A DATETIME data type is 8 bytes, and a TIMESTAMP data type is 4 bytes. (Starting with MySQL 5.6.4, a DATETIME requires 5 bytes plus any fractional-seconds storage.)

The disadvantage of using a TIMESTAMP is the implied DEFAULT 0, which is incompatible with SQL_MODE settings that disallow zero dates, such as NO_ZERO_DATE. A TIMESTAMP column is also NOT NULL by default, and accepts a NULL value only when explicitly declared with the NULL attribute. This column type is ideal for recording the date and time a row was created or last updated, where a value always exists.
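To check whether a value fits in a 4-byte TIMESTAMP, the range test can be sketched as follows. This is a minimal illustration assuming the documented TIMESTAMP range of 1 to 2^31 - 1 seconds after the epoch in UTC; the helper name fits_in_timestamp is hypothetical.

```python
from datetime import datetime, timezone

TIMESTAMP_MAX_SECONDS = 2**31 - 1  # largest signed 32-bit value


def fits_in_timestamp(value: datetime) -> bool:
    """True if value lies between 1 and 2**31 - 1 seconds after the epoch (UTC)."""
    seconds = int(value.timestamp())
    return 1 <= seconds <= TIMESTAMP_MAX_SECONDS


if __name__ == "__main__":
    upper = datetime.fromtimestamp(TIMESTAMP_MAX_SECONDS, tz=timezone.utc)
    print(upper.isoformat())  # 2038-01-19T03:14:07+00:00
    print(fits_in_timestamp(datetime(2011, 6, 1, tzinfo=timezone.utc)))  # True
    print(fits_in_timestamp(datetime(1950, 1, 1, tzinfo=timezone.utc)))  # False
```

Values such as dates of birth or far-future expiry dates fall outside this range and need a DATETIME (or DATE) column instead.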

ENUM

MySQL provides the ENUM data type, which is ideal for static code values. For example, when recording the values for gender, you could define a column as either of the following.

gender1 VARCHAR(6) NOT NULL

gender2 ENUM ('Male','Female') NOT NULL

There are three benefits of using the ENUM data type:

- An ENUM column provides additional data integrity with an implied check constraint.

- An ENUM column uses only 1 byte for up to 255 distinct values.

- The values of an ENUM column are better for readability. For example, if you have a status field, the use of an ENUM is compact in data size and provides a meaningful description of the column value.

status1 CHAR(1) NOT NULL DEFAULT 'N', -- 'N','A','X'

status2 ENUM ('New','Active','Archived') NOT NULL DEFAULT 'New'

Historically, the drawback of using an ENUM was that extending the range of values required a schema change whenever a new value was needed. This was an ALTER TABLE DDL statement, which is a blocking statement. Starting with MySQL 5.1, adding a value to the end of an ENUM column's list is very fast and unrelated to the size of the table.
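The storage behavior of an ENUM can be modeled with a short Python sketch. It assumes the documented behavior that each label is stored as a 1-based integer index, using 1 byte for up to 255 values and 2 bytes for up to 65,535; the names enum_storage_bytes, STATUS_VALUES, and STATUS_INDEX are hypothetical and serve only this illustration.

```python
def enum_storage_bytes(value_count: int) -> int:
    """Bytes per row for an ENUM column with value_count distinct labels."""
    if not 1 <= value_count <= 65535:
        raise ValueError("an ENUM supports between 1 and 65,535 values")
    return 1 if value_count <= 255 else 2


# Labels from the status2 example; each row stores the index, not the string.
STATUS_VALUES = ("New", "Active", "Archived")
STATUS_INDEX = {label: i for i, label in enumerate(STATUS_VALUES, start=1)}

if __name__ == "__main__":
    print(STATUS_INDEX)                           # label -> stored index
    print(enum_storage_bytes(len(STATUS_VALUES)))  # 1
```

Appending a new label at the end leaves every existing index unchanged, which is consistent with that kind of change not depending on table size.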

NULL vs. NOT NULL

Unless you are sure that a column can contain an unknown value (a NULL), it is best to define the column as NOT NULL. Frameworks, for example Ruby on Rails, are notorious for not defining mandatory columns as NOT NULL. When the column is part of an index, NOT NULL reduces the index size and simplifies index processing, as no additional NULL conditions are required. In addition, NOT NULL places an extra integrity constraint on the data in the column, ensuring all rows have a value for the column.

Implied Conversions

When you are choosing an indexed data type for table joins, it is important that the data types are identical. Implied type conversion can be an unnecessary overhead. For integer columns, ensure that SIGNED and UNSIGNED are used consistently. For a variable-length character data type, the added complexity includes the character set and collation. When you are defining indexed columns for table join conditions, ensure that these match as well. A common problem is an implied conversion between the LATIN1 and UTF8 character sets.

Column Types

Several types of data are commonly defined with inefficient column types. Changing the column’s data type can result in more efficient storage, especially when these columns are included within indexes. Following are a few common examples.

IP Address

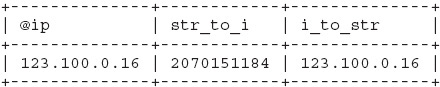

An IPv4 address can be defined as an INT UNSIGNED data type requiring 4 bytes. A common occurrence is a column definition of VARCHAR(15), which averages 12 bytes in size. This one improvement can save two-thirds of the original column data size. The INET_ATON() and INET_NTOA() functions manage the conversion between an IP string and a numeric value. Here is an example:

mysql> SET @ip='123.100.0.16';
mysql> SELECT @ip, INET_ATON(@ip) AS str_to_i,
    ->        INET_NTOA(INET_ATON(@ip)) AS i_to_str;

This technique is applicable only to IPv4. With IPv6 becoming more widely adopted, it is important that you also store these 128-bit values efficiently with a BINARY(16) data type rather than using a VARCHAR data type for the human-readable format.
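The same conversions can be performed in application code. The following is a minimal sketch using Python's standard ipaddress module; the function names inet_aton and inet_ntoa are hypothetical wrappers chosen to mirror the MySQL functions, and 2001:db8::1 is an arbitrary documentation address.

```python
import ipaddress


def inet_aton(ip: str) -> int:
    """Convert a dotted-quad IPv4 string to its 32-bit integer value."""
    return int(ipaddress.IPv4Address(ip))


def inet_ntoa(number: int) -> str:
    """Convert a 32-bit integer back to a dotted-quad IPv4 string."""
    return str(ipaddress.IPv4Address(number))


if __name__ == "__main__":
    n = inet_aton("123.100.0.16")
    print(n)             # 2070151184
    print(inet_ntoa(n))  # 123.100.0.16

    # An IPv6 address packs into exactly 16 bytes, suitable for BINARY(16).
    packed = ipaddress.IPv6Address("2001:db8::1").packed
    print(len(packed))   # 16
```

The integer fits an INT UNSIGNED column, and the 16-byte packed form is what a BINARY(16) column would hold for IPv6.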

MD5

Storing an MD5 hash as a CHAR(32) column is a common practice. If your application is using VARCHAR(32), you have the additional unnecessary overhead of storing the string length for every value. The hexadecimal value can be stored more efficiently by using the UNHEX() and HEX() functions and storing the data in a BINARY(16) data type. This conversion reduces the per-row space consumed from 32 bytes to 16 bytes.

The following example shows the length of the MD5 value in hexadecimal form and in its compact binary form:

mysql> SET @str='somevalue';
mysql> SELECT MD5(@str),
    ->        LENGTH(MD5(@str)) AS len_md5,
    ->        LENGTH(UNHEX(MD5(@str))) AS len_unhex;

This principle can be applied to any hexadecimal value—for example, if an index is defined for a hash of all columns.
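If the hash is computed in the application rather than in SQL, the same halving applies. The sketch below uses Python's standard hashlib module; the function name md5_sizes is hypothetical, and the input string simply reuses the value from the example above.

```python
import hashlib


def md5_sizes(value: str) -> tuple[int, int]:
    """Return (hex length, raw length) of the MD5 digest of value."""
    hex_digest = hashlib.md5(value.encode("utf-8")).hexdigest()
    raw_digest = bytes.fromhex(hex_digest)  # equivalent of UNHEX()
    assert raw_digest.hex() == hex_digest   # equivalent of HEX(), round trip
    return len(hex_digest), len(raw_digest)


if __name__ == "__main__":
    print(md5_sizes("somevalue"))  # (32, 16)
```

The raw 16-byte digest is the value to bind to a BINARY(16) column; converting it back to hexadecimal is only needed for display.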
