Understanding the nine-step tuning process is not enough to make a system work efficiently. You also need a formal, quantitative way to measure performance, and a specific vocabulary to avoid misunderstandings. The vocabulary may vary somewhat, but the following terms are fundamental:

- Command. An atomic part of the process (any command on any tier).

- Step. A complete processing cycle in one direction (always one-way) that can be either a communication step between one tier and another or a set of commands within the same tier. A step consists of one or more commands.

- Request. An action consisting of a number of steps. A request is passed between different processing tiers.

- Round-trip. A complete cycle from the moment the request leaves the tier to the point when it returns with some response information.

Under the best circumstances (when you can acquire complete information about every step), the concept of a round-trip is redundant. In the real world, though, getting precise measurements for all nine steps is extremely complicated because there are two completely different kinds of steps:

- Steps 1, 3, 5, 7, 9—Both the start and end of the step are within the same tier and the same programming environment.

- Steps 2, 4, 6, 8—The start and end are in different tiers.

When a step starts in one tier and ends in another, its duration can only be computed from timestamps taken on two different clocks. If time is not synchronized between the tiers, such measurements are useless. This problem can be partially solved in closed networks (such as military or government-specific ones), but for the majority of Internet-based applications, a lack of time synchronization is a roadblock because there is no way to get reliable numbers.
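The effect of unsynchronized clocks can be illustrated with a small simulation. This is only a sketch: the timestamps, the clock offsets, and the function names `one_way_elapsed` and `same_tier_elapsed` are hypothetical and chosen for illustration. It shows that a cross-tier measurement absorbs whatever offset exists between the two clocks, while a measurement taken entirely on one clock does not.

```python
def one_way_elapsed(sent_at, received_at, sender_offset, receiver_offset):
    """Elapsed time computed from timestamps taken on two different clocks."""
    sender_reading = sent_at + sender_offset        # what the sending tier's clock shows
    receiver_reading = received_at + receiver_offset  # what the receiving tier's clock shows
    return receiver_reading - sender_reading


def same_tier_elapsed(started_at, ended_at, offset):
    """Elapsed time computed from two timestamps taken on the same clock."""
    return (ended_at + offset) - (started_at + offset)


# Hypothetical scenario: a message leaves at t=100 s and arrives at t=102 s in true time.
# The receiving tier's clock is off by an unknown amount (the skew).
for skew in (0.0, 5.0, -3.0):
    cross = one_way_elapsed(100.0, 102.0, sender_offset=0.0, receiver_offset=skew)
    local = same_tier_elapsed(100.0, 102.0, offset=skew)
    print(f"skew={skew:+.1f}s  cross-tier={cross:.1f}s  same-tier={local:.1f}s")
```

The cross-tier result changes with the skew and can even be negative, whereas the same-tier result stays at the true duration because the offset cancels out.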

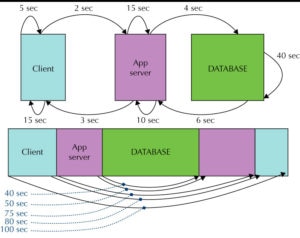

The concept of a “round-trip” enables us to get around this issue. See Figure 1 below.

FIGURE 1. Round-trip timing of the nine-step process

As you can see, a full request-response cycle can also be represented as five nested round-trips, one within the other. Here are their descriptions, from the innermost to the outermost:

1. From the moment that a request is accepted in the database to the moment when a response is sent back from the database (start of the PL/SQL block to end of the PL/SQL block)—40 seconds in Figure 1.

2. From the moment that a request was sent to the database to the moment that a response was received from the database (start of JDBC call to the end of JDBC call)—50 seconds (40 + 4 + 6).

3. From the moment that a request was accepted to the moment that a response was sent back to the client machine (start of processing in the servlet to end of processing in the servlet)—75 seconds (50 + 10 + 15).

4. From the moment that a request was sent to the application server to the moment when a response was received from the application server (start of servlet call to the end of servlet call)—80 seconds (75 + 2 + 3).

5. From the moment that a request was initiated (user clicked the button) to the end of processing (a response is displayed)—100 seconds (80 + 15 + 5).
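As a rough illustration of how two of these nested round-trips might be timed, the sketch below wraps each one in a timer that reads a single monotonic clock at the start and at the end. The helper `round_trip`, the labels, and the `time.sleep` placeholders are assumptions made for the example. In a real system round-trip 1 would be timed inside the database and round-trip 2 in the application server, each on its own clock; here everything runs in one process purely to show the nesting.

```python
import time
from contextlib import contextmanager

# Elapsed seconds per round-trip, keyed by a label chosen by the caller.
timings = {}


@contextmanager
def round_trip(label):
    """Time the enclosed block with one monotonic clock, so start and end are comparable."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[label] = time.perf_counter() - start


def run_database_block():
    # Stand-in for the PL/SQL block (round-trip 1); the sleep is a placeholder for real work.
    with round_trip("rt1_database_block"):
        time.sleep(0.02)


def call_database():
    # Stand-in for the JDBC call (round-trip 2), which encloses round-trip 1.
    with round_trip("rt2_database_call"):
        time.sleep(0.005)  # placeholder for the trip to the database
        run_database_block()
        time.sleep(0.005)  # placeholder for the trip back


call_database()
both_ways = timings["rt2_database_call"] - timings["rt1_database_block"]
print(f"database call: {timings['rt2_database_call']:.3f} s")
print(f"database block: {timings['rt1_database_block']:.3f} s")
print(f"time outside the block, both ways: {both_ways:.3f} s")
```

Because each timer reads only its own tier's clock, the two durations can be compared without any synchronization between tiers.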

Now there is a “nested” set of numbers that is completely valid, because each round-trip starts and ends in the same tier and both of its timestamps therefore come from the same clock. This allows calculation of the following:

- Total time spent between the client machine and the application server both ways (Step 2 + Step 8) = round-trip 4 (80 seconds) minus round-trip 3 (75 seconds) = 5 seconds.

- Total time spent between the application server and the database both ways (Step 4 + Step 6) = round-trip 2 (50 seconds) minus round-trip 1 (40 seconds) = 10 seconds.

Although there is no way to split these totals into individual one-way steps, this is significantly better than no data at all, because two-way timing provides a fairly reliable understanding of what percentage of the total request time is lost during these network operations. These measurements provide enough information to decide where to spend tuning resources, which is the most critical decision in the whole tuning process.
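The arithmetic above can be sketched in a few lines, using the round-trip durations from Figure 1. The variable names are illustrative only. Alongside the two network totals, the sketch derives the time spent inside each tier in the same way, by subtracting each round-trip from the one that encloses it.

```python
# Round-trip durations in seconds, taken from Figure 1 (1 = innermost, 5 = outermost).
round_trips = {1: 40, 2: 50, 3: 75, 4: 80, 5: 100}

total = round_trips[5]

# Each entry is the difference between a round-trip and the one nested inside it.
breakdown = {
    "client (Steps 1 + 9)": round_trips[5] - round_trips[4],
    "client <-> application server (Steps 2 + 8)": round_trips[4] - round_trips[3],
    "application server (Steps 3 + 7)": round_trips[3] - round_trips[2],
    "application server <-> database (Steps 4 + 6)": round_trips[2] - round_trips[1],
    "database (Step 5)": round_trips[1],
}

for label, seconds in breakdown.items():
    print(f"{label}: {seconds} s ({seconds / total:.0%} of the request)")
```

The five values add up to the full 100 seconds, so each one can be read directly as a share of the total request time.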
