Upgrading to GI has the same requirements for a cluster node as a new install, so you will need to obtain a SCAN IP and make sure the kernel parameters and O/S packages meet the new requirements. The only exception is that your existing raw devices can still be used for the OCR and voting files. An upgrade to GI installs the software into a completely new home, which is known as an out-of-place upgrade. This allows the current software to keep running until rootupgrade.sh (similar to root.sh) is run on the local node. The upgrade can also be done in a rolling manner, so that Oracle Database 11g Release 2 GI can be running on one node while a previous version is running on other nodes. This works because 11g Release 2 will run as though it were the old version until all nodes in the cluster are upgraded. Then, and only then, will the CRS active version be set to 11.2 and the cluster software assume its new version.

NOTE

This functionality is provided to allow rolling upgrade, and different versions of the clusterware should not be running in the cluster for more than a short time. In addition, the GI software must be installed by the same user who owns the previous version CRS software.
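The rolling-upgrade behavior described above can be illustrated with a small model. This is only a sketch under a simplifying assumption: the cluster's active version is taken to be the lowest software version installed on any node. The function names and node names are hypothetical and are not part of any Oracle tool. On a real cluster the corresponding values are reported by `crsctl query crs activeversion` and `crsctl query crs softwareversion`.

```python
from typing import Dict, Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as '11.2.0.1' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def active_version(software_versions: Dict[str, str]) -> str:
    """Return the version the whole cluster behaves as.

    Simplified model: the cluster runs as the oldest version present,
    so the active version only moves once every node has been upgraded.
    """
    return min(software_versions.values(), key=parse_version)


# Hypothetical three-node cluster part-way through a rolling upgrade.
cluster = {"node1": "11.2.0.1", "node2": "11.1.0.7", "node3": "11.1.0.7"}
print(active_version(cluster))  # still behaves as the old version

# Once the remaining nodes are upgraded, the active version can switch.
cluster["node2"] = "11.2.0.1"
cluster["node3"] = "11.2.0.1"
print(active_version(cluster))
```

The model makes the key point visible: upgrading one node changes that node's software version, but not the version the cluster as a whole operates at.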

Things to note for ASM

If you have ASM, note that ASM versions prior to 11.1.0.6 cannot be upgraded in a rolling manner. This means that although you can do a rolling upgrade of the clusterware stack, at some point all pre-11.1 ASM instances must be down at the same time in order to upgrade ASM to Oracle Database 11g Release 2. It is possible to choose not to upgrade ASM at GI upgrade time, but this is not advisable because 11g Release 2 GI was built to work with 11g Release 2 ASM. It is better to take the downtime during the upgrade than to have to reschedule it later, or to encounter a problem and have to shut down in an emergency. Of course, any databases using ASM will be down while ASM is unavailable.

NOTE

For an 11.1.0.6 ASM home to be rolling upgraded to 11g Release 2, the patch for bug 6872001 must have been applied to that home.
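The ASM rules above amount to a short decision check. The following is a minimal sketch of that check, not an Oracle utility: the function name and its parameters are hypothetical. It also folds in a condition stated later in this section, namely that asmca shuts down all instances if the old ASM home is on a shared disk. The patch requirement is applied only to 11.1.0.6, because that is the only version for which the text states it.

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as '11.1.0.6' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def asm_can_roll(asm_version: str,
                 has_patch_6872001: bool = False,
                 home_on_shared_disk: bool = False) -> bool:
    """Return True if a pre-11.2 ASM home is a candidate for rolling upgrade.

    Intended only for source versions earlier than 11.2.
    """
    version = parse_version(asm_version)
    if version < (11, 1, 0, 6):
        return False  # versions prior to 11.1.0.6 cannot be rolling upgraded
    if home_on_shared_disk:
        return False  # a shared ASM home means all instances go down together
    if version == (11, 1, 0, 6) and not has_patch_6872001:
        return False  # 11.1.0.6 needs the patch for bug 6872001
    return True


for version, patched in [("10.2.0.4", False), ("11.1.0.6", False),
                         ("11.1.0.6", True), ("11.1.0.7", False)]:
    outcome = "rolling" if asm_can_roll(version, patched) else "full ASM outage"
    print(f"ASM {version} (patch applied: {patched}): {outcome}")
```

A check like this is useful at planning time, since the answer determines whether a complete ASM outage has to be scheduled.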

Upgrade paths

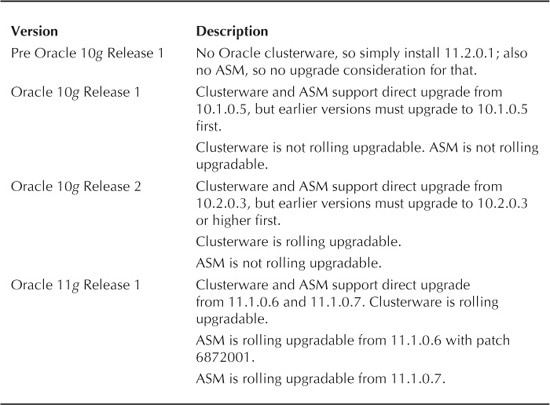

The upgrade paths available for Oracle Clusterware are shown in Table 1.

The actual upgrade

The upgrade is completed using the normal installer, and rather than go through the screens again, we will mention a few important points here. The upgrade will involve the same screens that appear in a normal installation.

CVU (the Cluster Verification Utility) should be run prior to the upgrade to ensure that the stage -pre crsinst requirements are met.

The installer must be run as the same user who owned the clusterware home that we are upgrading from.

The installer should find existing clusterware installed and select Upgrade on the initial screen. If it does not, stop, because this means there is a problem.

If the installer finds an existing 11.1 ASM installation, it will warn that upgrading ASM in this session will require that the ASM instance be shut down. You should shut down the database instances it supports. rootupgrade.sh will shut down the stack on this node anyway, so this warning is mainly of interest if you cannot perform a rolling upgrade of ASM, as per Table 1.

TABLE 1. Upgrade Paths to Oracle 11g Release 2 Grid Infrastructure

- You do have the option of deselecting the Upgrade Cluster ASM checkbox on the Node Selection screen when ASM is discovered; however, it is recommended that you upgrade ASM at the time of the clusterware upgrade.

- The script to configure and start GI is rootupgrade.sh, not root.sh.

- rootupgrade.sh must be run to completion on the installation node before it is run on any other node. It can then be run in parallel on all but the last node. The last node to be upgraded must again be run on its own, after the others have completed. If this order is not followed, some nodes will need to be deconfigured and the script rerun in the correct order.

- After the root scripts are run, asmca will upgrade ASM if that option was chosen. It will shut down all instances and upgrade them if the old ASM home is on a shared disk and/or the ASM version is prior to 11.1.0.6. If ASM 11.1.0.6 or 11.1.0.7 is used, it will attempt a rolling upgrade of ASM to avoid a complete loss of service.

- Nodes with pre-11.2.0.1 databases running in the cluster will have their node numbers pinned automatically when upgrading to 11.2.0.1 and will be placed in the Generic pool, because older versions of the ASM and RDBMS software require administrator management.
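The ordering rule for rootupgrade.sh in the list above (installation node first, middle nodes in parallel, last node alone) can be sketched as a small planning helper. This is an illustration only: the function and node names are hypothetical, and the choice of which node is "last" is an assumption (here, simply the final entry in the list).

```python
from typing import List


def rootupgrade_phases(nodes: List[str], install_node: str) -> List[List[str]]:
    """Group nodes into phases; nodes within one phase may run in parallel."""
    if install_node not in nodes:
        raise ValueError(f"{install_node} is not in the node list")
    rest = [node for node in nodes if node != install_node]
    phases = [[install_node]]          # installation node runs first, alone
    if len(rest) > 1:
        phases.append(rest[:-1])       # all but the last node, in parallel
    if rest:
        phases.append([rest[-1]])      # last node runs on its own
    return phases


plan = rootupgrade_phases(["rac1", "rac2", "rac3", "rac4"], install_node="rac1")
for step, group in enumerate(plan, start=1):
    print(f"phase {step}: run rootupgrade.sh on {', '.join(group)}")
```

Each phase must finish before the next begins. On a two-node cluster the middle phase disappears and the script is simply run on one node and then the other.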
